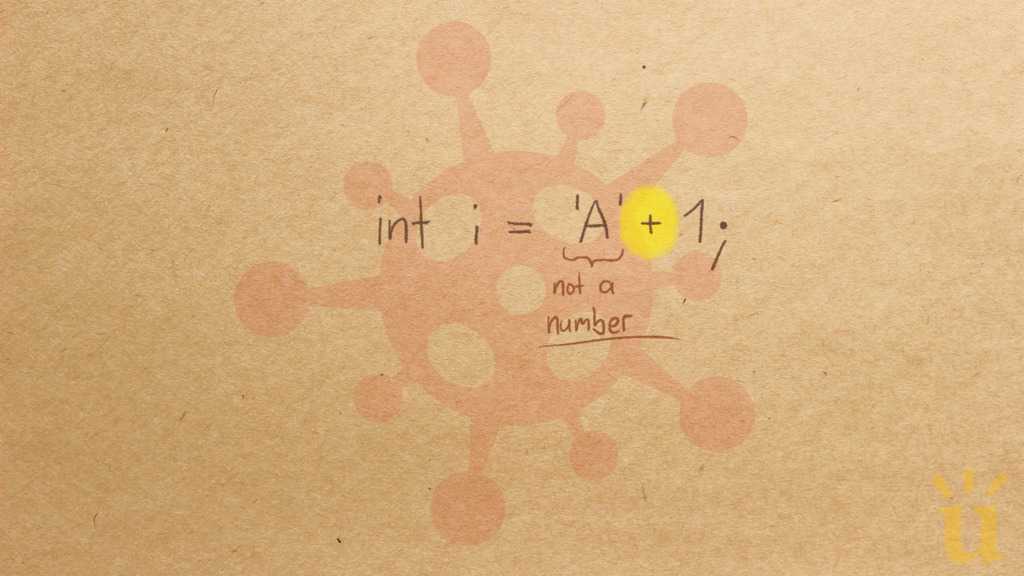

CharNotNumeric

Observed

Incorrect: Char is not a numeric type

Correct: Char is a numeric type

Correction

Here is what's right.

In Java, values of type char are characters,

but at the same time they can be seen as unsigned 16-bit integer numbers.
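
As a minimal sketch of this dual nature, the following Java snippet treats a char as a number. The class name CharAsNumber and the helper codeOf are hypothetical names chosen for illustration only.

```java
public class CharAsNumber {
    // Hypothetical helper: returns the numeric value (UTF-16 code unit) of a char.
    static int codeOf(char c) {
        return c; // implicit widening conversion from char to int
    }

    public static void main(String[] args) {
        char a = 'A';
        System.out.println(codeOf(a));       // prints 65
        System.out.println((char) (a + 1));  // prints B

        // char is unsigned and 16 bits wide, so its largest value is 65535,
        // and casting one past that value wraps around to 0.
        System.out.println((int) Character.MAX_VALUE);   // prints 65535
        char wrapped = (char) (Character.MAX_VALUE + 1);
        System.out.println((int) wrapped);               // prints 0
    }
}
```

Note that a + 1 has type int, which is why the explicit cast back to char is needed before printing it as a character.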

'A' + 1 == 'B'

Value

How can you build on this misconception?

The char type in Java provides a leaky abstraction.

It claims to represent an individual character.

At the same time, it lets some of the underlying representation shine through:

characters are encoded as numbers (in Unicode).

This may be convenient in some situations,

e.g., to use a loop to fill an array with the 26 letters of the English alphabet (for (char c = 'A'; c <= 'Z'; c++)),

to translate between lowercase and uppercase letters (c + 'A' - 'a'),

or to implement a Caesar cipher (c + shift).
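
The three uses just mentioned might look roughly like the sketch below. The class and method names are hypothetical, and the Caesar cipher adds a wrap-around within 'A' to 'Z' that the bare expression c + shift does not include; all three methods assume plain English letters.

```java
public class CharArithmeticUses {
    // Fill an array with the 26 letters 'A'..'Z' by counting through char values.
    static char[] alphabet() {
        char[] letters = new char[26];
        for (char c = 'A'; c <= 'Z'; c++) {
            letters[c - 'A'] = c; // c - 'A' is the letter's index, 0..25
        }
        return letters;
    }

    // Lowercase to uppercase; only meaningful for 'a'..'z'.
    static char toUpperAscii(char c) {
        return (char) (c + 'A' - 'a');
    }

    // Caesar cipher for 'A'..'Z'; floorMod keeps the result in 0..25,
    // also for negative shifts.
    static char caesar(char c, int shift) {
        return (char) ('A' + Math.floorMod(c - 'A' + shift, 26));
    }

    public static void main(String[] args) {
        System.out.println(new String(alphabet())); // prints ABCDEFGHIJKLMNOPQRSTUVWXYZ
        System.out.println(toUpperAscii('q'));      // prints Q
        System.out.println(caesar('Z', 1));         // prints A
    }
}
```

In each method the arithmetic result is an int, so the cast back to char is what turns the number into a character again.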

These uses seem to be common in educational code snippets

(maybe due to their cleverness),

but they seem less common in programming practice,

because they can be error-prone

(e.g., they easily break for non-English languages).
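
One way such a break can show up is sketched below, using the Polish letter ą (U+0105) as an example chosen for this illustration. The class name and the helper toUpperAscii are hypothetical; Character.toUpperCase is the standard library method.

```java
public class NonEnglishBreakage {
    // Arithmetic case conversion; implicitly assumes the input is in 'a'..'z'.
    static char toUpperAscii(char c) {
        return (char) (c + 'A' - 'a');
    }

    public static void main(String[] args) {
        char lower = '\u0105'; // Polish lowercase a with ogonek

        // The arithmetic subtracts 32 and lands on U+00E5, an unrelated letter.
        char naive = toUpperAscii(lower);
        System.out.println(Integer.toHexString(naive));   // prints e5

        // The library method uses Unicode case mappings and yields U+0104.
        char proper = Character.toUpperCase(lower);
        System.out.println(Integer.toHexString(proper));  // prints 104
    }
}
```

The arithmetic version silently produces a wrong character instead of failing, which is part of what makes this style error-prone.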

It might have been a better language design to treat char as just a character type,

and to not imbue it with arithmetic operations.

Thus, a student with this misconception

may simply see Java as a cleaner language than it actually is.